Project:

[wedge1]

↓

[ropfetch3]

↓

[ropfetch2]

↓

[partitionbyframe1]

↓

[imagemagick1]

↓

[waitforall1]

↓

[ffmpegencodevideo1]

↓

[drop_and_render]

Submissions:

├─ wedge1_to_imagemagick1

│ ├─ wedge1

│ ├─ ropfetch3

│ ├─ ropfetch2

│ ├─ partitionbyframe1

│ └─ imagemagick1

└─ waitforall1_to_ffmpegencodevideo1

├─ waitforall1

└─ ffmpegencodevideo1

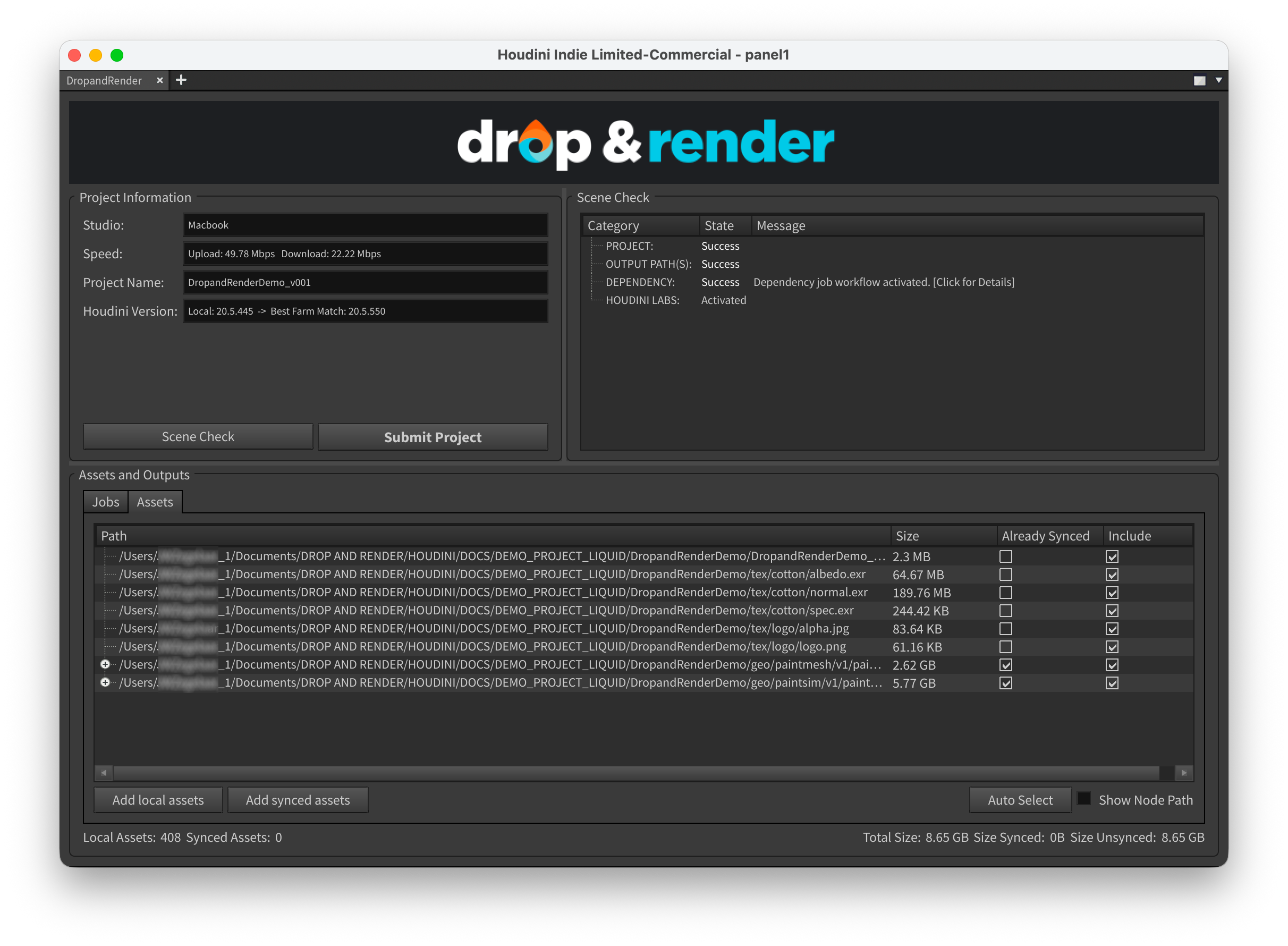
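The grouping shown above can be sketched in a few lines of Python. This is only an illustration, not the plugin's actual logic: `group_into_submissions` and the `starts_new_group` set are hypothetical names, and the assumption that a new sub-job begins at `waitforall1` is inferred from the example tree rather than from a documented rule. The `first_to_last` naming simply mirrors the sub-job names in the example.

```python
from typing import Dict, List, Set


def group_into_submissions(chain: List[str], starts_new_group: Set[str]) -> Dict[str, List[str]]:
    """Split a linear chain of node names into named sub-jobs.

    A new group is started at every node listed in `starts_new_group`
    (an assumption made for this sketch). Each group is named
    "<first node>_to_<last node>", matching the example tree.
    """
    groups: List[List[str]] = []
    for node in chain:
        if not groups or node in starts_new_group:
            groups.append([])
        groups[-1].append(node)
    return {f"{group[0]}_to_{group[-1]}": group for group in groups}


chain = [
    "wedge1",
    "ropfetch3",
    "ropfetch2",
    "partitionbyframe1",
    "imagemagick1",
    "waitforall1",
    "ffmpegencodevideo1",
]

# Assumed split point, taken from the example above.
submissions = group_into_submissions(chain, {"waitforall1"})

for name, nodes in submissions.items():
    print(name, nodes)
```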

#### Configuring downloads

You can set downloads for entire sub-jobs as well as for individual nodes. Changing the sub-job setting changes every node within it. Downloads are handled by our standard systems, and each node's output ends up exactly where a local cook would place it.

**Per-sub-job downloads:**

```
├─ wedge1_to_imagemagick1 - Download: No
│  ├─ wedge1
│  ├─ ropfetch3
│  ├─ ropfetch2
│  ├─ partitionbyframe1
│  └─ imagemagick1
└─ waitforall1_to_ffmpegencodevideo1 - Download: Yes
   ├─ waitforall1
   └─ ffmpegencodevideo1
```

**Per-node downloads:**

```
├─ wedge1_to_imagemagick1 - Download: No
│  ├─ wedge1
│  ├─ ropfetch3 - Download: Yes
│  ├─ ropfetch2
│  ├─ partitionbyframe1
│  └─ imagemagick1 - Download: Yes
└─ waitforall1_to_ffmpegencodevideo1 - Download: No
   ├─ waitforall1
   └─ ffmpegencodevideo1 - Download: Yes
```

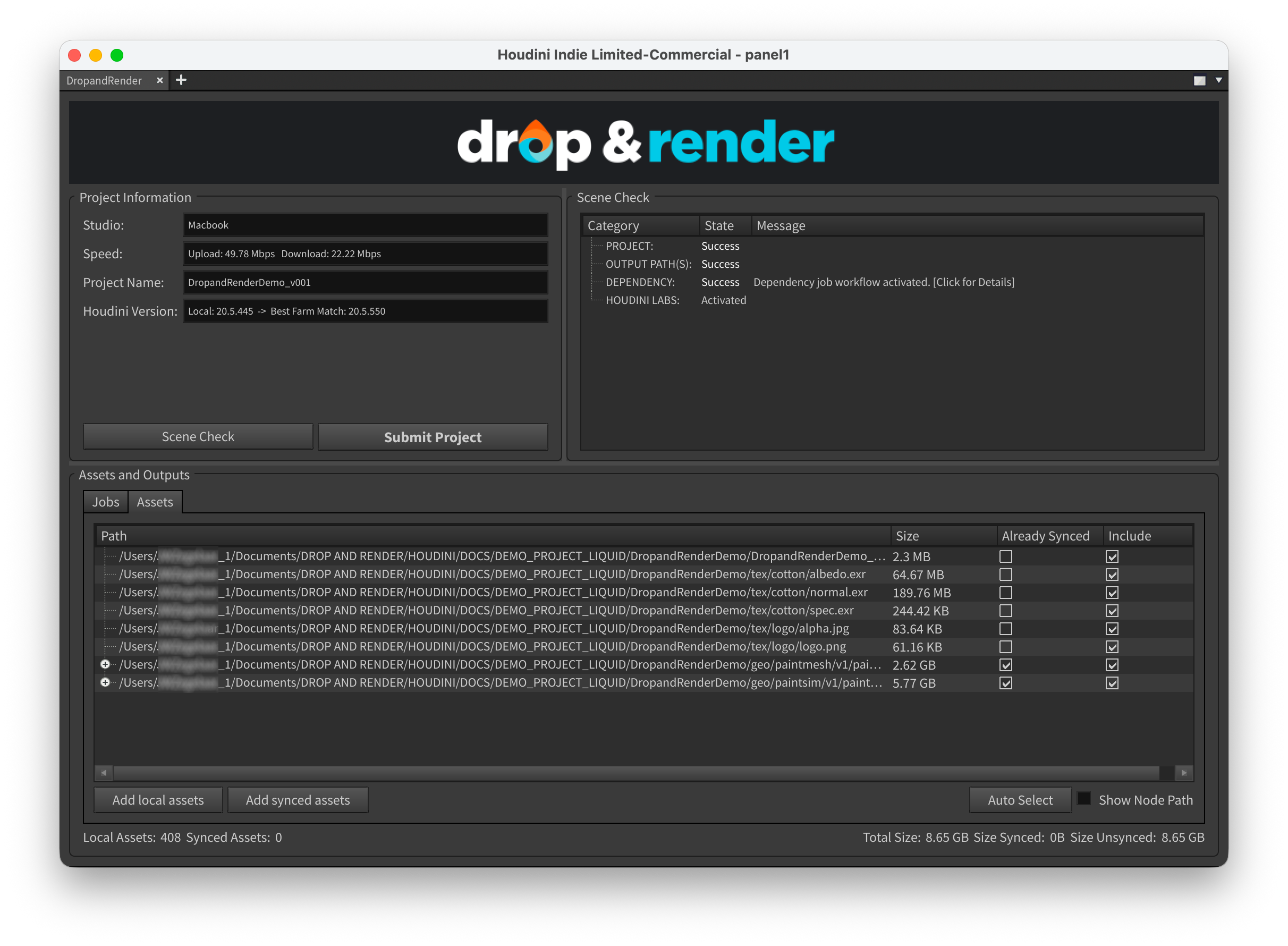
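As a rough mental model of how the two levels interact, the sketch below treats the sub-job setting as a bulk change that writes to every node, after which individual nodes can still be toggled. The `SubJob` class and its method names are hypothetical and exist only for this illustration; they are not part of the plugin's API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SubJob:
    """Hypothetical model of a sub-job and its per-node download flags."""

    name: str
    nodes: List[str]
    download: Dict[str, bool] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Assumption for this sketch: downloads start out disabled.
        for node in self.nodes:
            self.download.setdefault(node, False)

    def set_subjob_download(self, enabled: bool) -> None:
        # Changing the sub-job setting changes all the nodes within.
        for node in self.nodes:
            self.download[node] = enabled

    def set_node_download(self, node: str, enabled: bool) -> None:
        # A single node can be toggled without touching its siblings.
        if node not in self.download:
            raise KeyError(f"{node} is not part of {self.name}")
        self.download[node] = enabled


job = SubJob(
    "wedge1_to_imagemagick1",
    ["wedge1", "ropfetch3", "ropfetch2", "partitionbyframe1", "imagemagick1"],
)

# Reproduce the per-node example: sub-job off, two nodes switched on.
job.set_subjob_download(False)
job.set_node_download("ropfetch3", True)
job.set_node_download("imagemagick1", True)

downloaded = [node for node in job.nodes if job.download[node]]
print(downloaded)
```

Note that in this model the order of operations matters: calling `set_subjob_download` after the per-node calls would overwrite them, which matches the statement that changing the sub-job setting changes all the nodes within.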

#### Cooking partial graphs

By connecting our node further up the chain, you can cook a smaller part of your full graph. Use this to offload work that is expensive to run but changes infrequently, such as simulations, to the farm while keeping lighter tasks local. This gets you results sooner at first and lets you iterate quickly and locally later on.

Project:

[wedge1]

↓

[ropfetch3] → [drop_and_render]

↓

[ropfetch2]

↓

[partitionbyframe1]

↓

[imagemagick1]

↓

[waitforall1]

↓

[ffmpegencodevideo1]

Submissions:

└─ wedge1_to_imagemagick1

├─ wedge1

└─ ropfetch3
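The effect of the connection point can be sketched as a simple slice of the chain: everything up to and including the node that `drop_and_render` is connected to goes to the farm, and the rest stays local. The helper name `split_at_connection` is hypothetical, and the sketch assumes a strictly linear chain like the one in the example.

```python
from typing import List, Tuple


def split_at_connection(chain: List[str], connected_to: str) -> Tuple[List[str], List[str]]:
    """Return (farm_nodes, local_nodes) for a linear chain.

    `connected_to` is the node the submit node is wired to. Nodes up to
    and including it are submitted; downstream nodes are left for a
    local cook. Raises ValueError if the node is not in the chain.
    """
    index = chain.index(connected_to)
    return chain[: index + 1], chain[index + 1 :]


chain = [
    "wedge1",
    "ropfetch3",
    "ropfetch2",
    "partitionbyframe1",
    "imagemagick1",
    "waitforall1",
    "ffmpegencodevideo1",
]

farm, local = split_at_connection(chain, "ropfetch3")
print("farm: ", farm)
print("local:", local)
```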